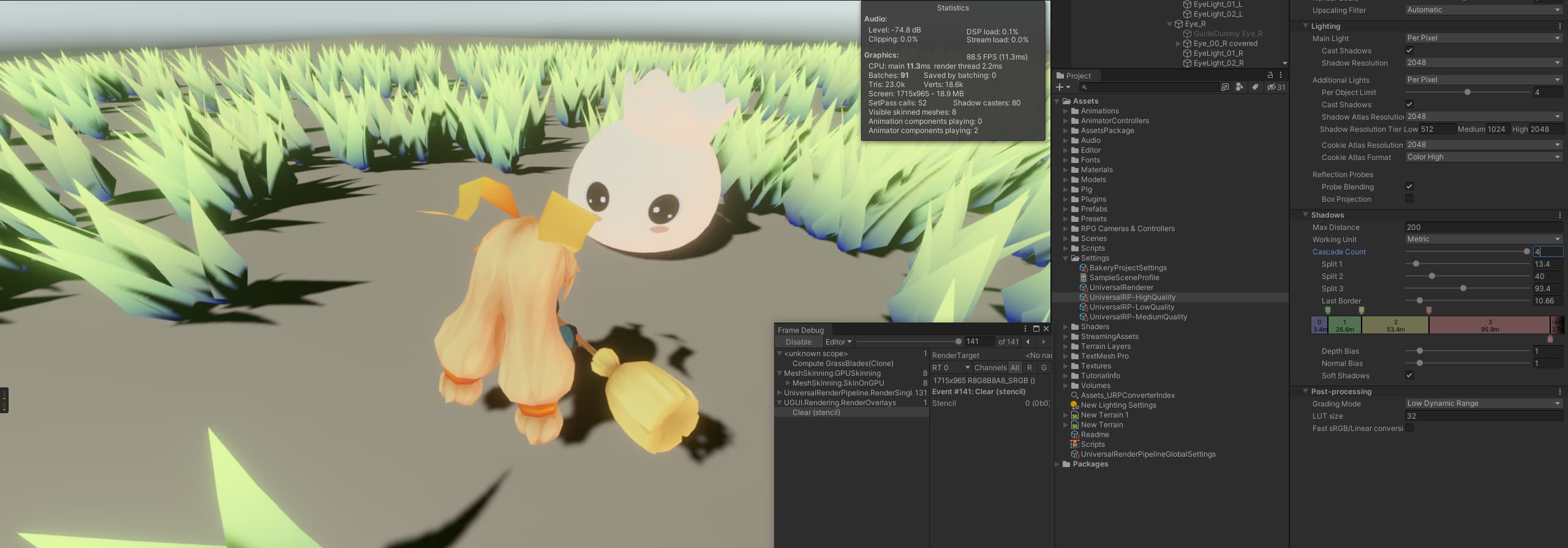

Introduction

A brief record of how the Lit shader in URP implements its lighting calculation.

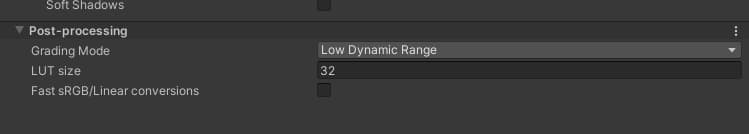

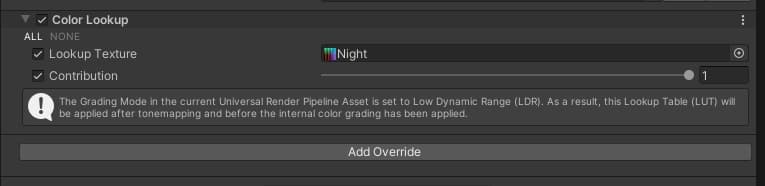

Post-processing

Bloom

Anti-aliasing

Fog

LUT

Color Lookup component

ColorLookup.cs

ApplyColorGrading

Decals

Screen-space decals

UnityURPUnlitScreenSpaceDecalShader

LBW-WallMerge

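Principle

A ray is cast from the camera to each vertex of the decal model. The length of that ray is then scaled by a factor taken from the screen depth texture, so that the end point of the ray lands on the surface of the scene geometry. The resulting point is used as a new position in decal space (decalSpace), and its x and z components serve as the UV for sampling the decal texture.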
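The principle above can be sketched in HLSL roughly as follows. This is a minimal sketch, not the code of either shader named above. The function and parameter names are hypothetical, the ray is assumed to be already expressed in the decal's object space and scaled so that its view-space depth equals 1, and the decal volume is assumed to be a unit cube centered at the origin.

```hlsl
// Hypothetical helper: walks along the camera ray until it reaches the scene surface.
// cameraPosOS   : camera position in the decal's object space (assumption)
// viewRayOS     : ray toward the decal vertex, scaled so its view-space depth is 1 (assumption)
// sceneEyeDepth : linear eye depth read from the screen depth texture
float3 ReconstructDecalSpacePos(float3 cameraPosOS, float3 viewRayOS, float sceneEyeDepth)
{
    // Multiplying the unit-depth ray by the scene depth puts the end point
    // on the scene geometry instead of on the decal mesh.
    return cameraPosOS + viewRayOS * sceneEyeDepth;
}

// Hypothetical helper: maps the decal-space xz plane to a [0, 1] UV range.
float2 DecalUVFromXZ(float3 decalSpacePos)
{
    // The unit cube spans [-0.5, 0.5] on each axis, so shifting by 0.5 gives [0, 1].
    return decalSpacePos.xz + 0.5;
}

// Hypothetical helper: discards the pixel if the surface point is outside the decal cube.
void ClipOutsideDecalBox(float3 decalSpacePos)
{
    // clip() discards the pixel when any component is negative.
    clip(0.5 - abs(decalSpacePos));
}
```

In a fragment shader these would be called in order: reconstruct the position, clip it against the box, then sample the decal texture with the returned UV.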

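Baked GI

```hlsl
inputData.bakedGI = SAMPLE_GI(input.lightmapUV, input.vertexSH, inputData.normalWS);
```

SAMPLE_GI is a macro. Depending on whether a lightmap is present, it either samples the lightmap (SampleLightmap) or falls back to the global illumination stored as spherical harmonics (SampleSHPixel).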
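The selection can be pictured as a two-branch macro. The sketch below assumes the simple form used by earlier URP versions; newer versions add further branches, and the exact signatures of SampleLightmap and SampleSHPixel differ between versions, so treat this as an illustration rather than the current library source. It depends on URP's lighting includes for the two functions.

```hlsl
// Sketch only: assumed two-branch shape of the macro.
#if defined(LIGHTMAP_ON)
    // A baked lightmap exists for this object: the SH argument is ignored
    // and the lightmap is sampled with the lightmap UV.
    #define SAMPLE_GI(lmName, shName, normalWSName) SampleLightmap(lmName, normalWSName)
#else
    // No lightmap: the lightmap UV argument is ignored and the
    // spherical-harmonics term is evaluated per pixel instead.
    #define SAMPLE_GI(lmName, shName, normalWSName) SampleSHPixel(shName, normalWSName)
#endif
```

Because the choice is made by the preprocessor, each shader variant contains only one of the two sampling paths.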

SampleLightmap

```hlsl
// Sample baked lightmap. Non-Direction and Directional if available.
```

SampleSHPixel

Lightmap baking

Global illumination

```hlsl
half3 color = GlobalIllumination(
```

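Reflection result in GI

Result of sampling the skybox and reflection probes

Reflection probes enabled

Reflection probes disabled

Main light result

```hlsl
color += LightingPhysicallyBased(brdfData, brdfDataClearCoat,
```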

Point light result

LightingPhysicallyBased(BRDF)

```hlsl
half3 LightingPhysicallyBased(BRDFData brdfData, BRDFData brdfDataClearCoat,
```

Applying fog

```hlsl
color.rgb = MixFog(color.rgb, inputData.fogCoord);
```

Height fog

```hlsl
float3 _FogColor;
```

Realtime GI

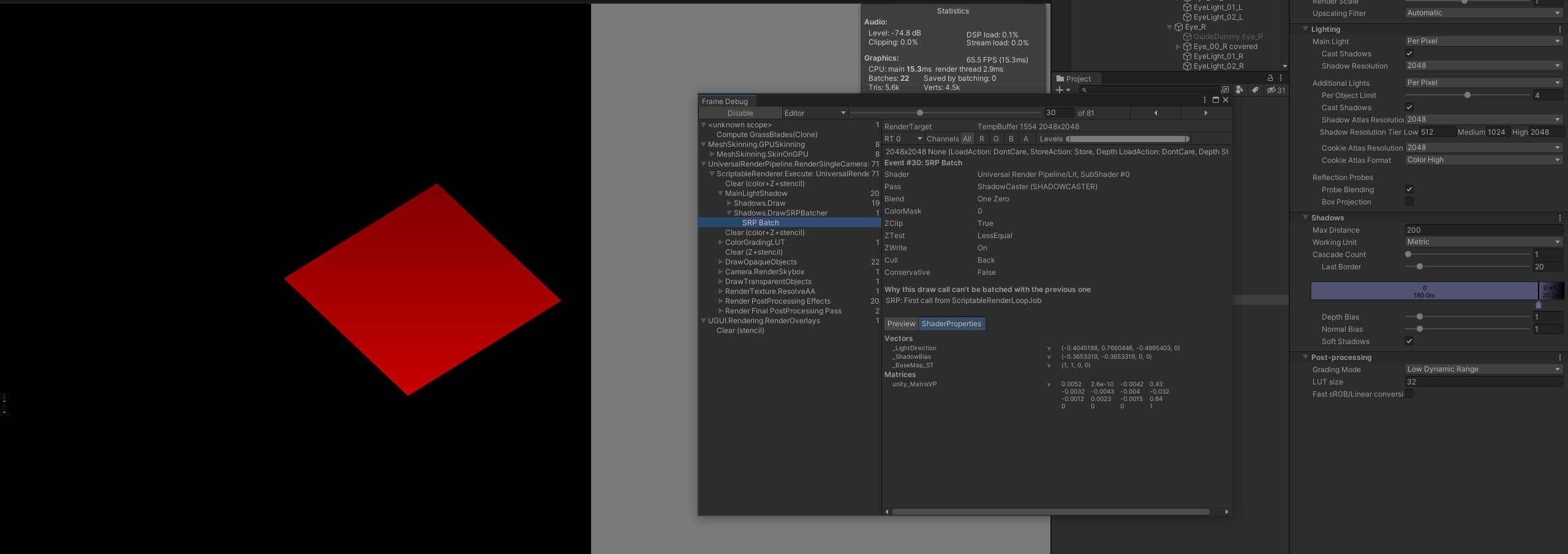

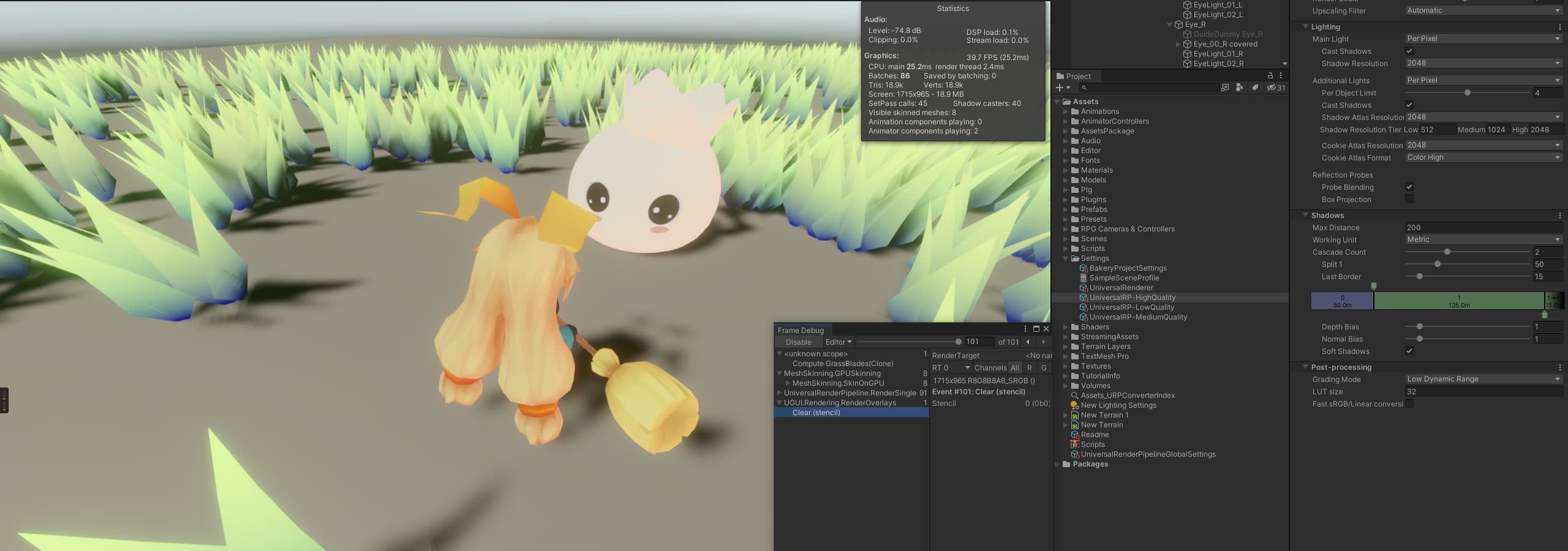

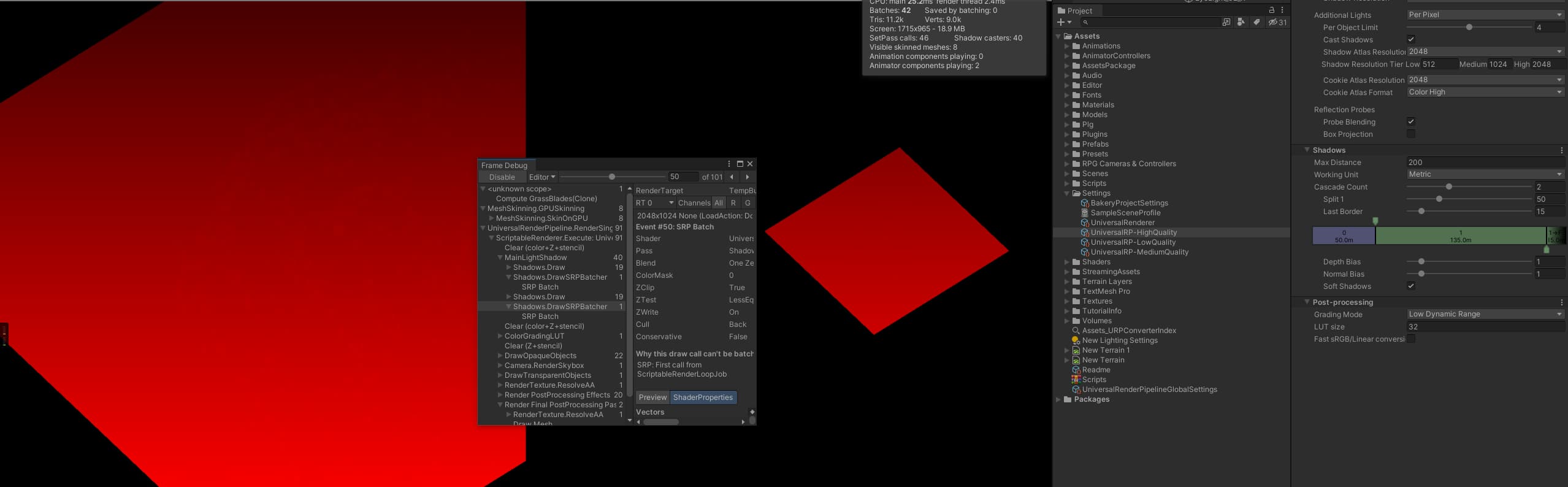

Shadows

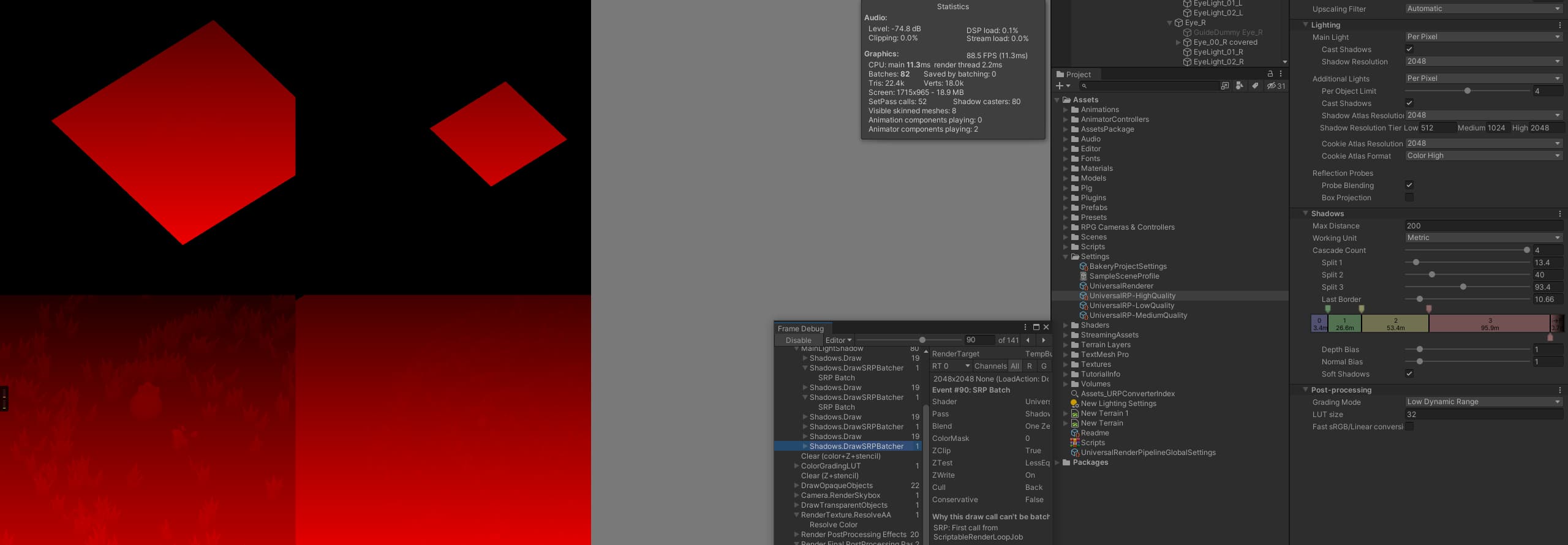

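Cascaded shadows

One cascade

Two cascades

Four cascades

A clearly visible straight seam appears between adjacent cascade levels. Dithered culling (Dither Cull) can be used to blend the transition.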
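One way such a dithered transition can work is sketched below. This is an assumption about the technique, not the code used in this project: all function and parameter names are hypothetical, cascades are assumed to be bounded by culling spheres, and the noise function is the widely used interleaved gradient noise pattern.

```hlsl
// Hypothetical helper: per-pixel noise in [0, 1) from the pixel coordinate.
float DitherNoise(float2 pixelCoord)
{
    return frac(52.9829189 * frac(dot(pixelCoord, float2(0.06711056, 0.00583715))));
}

// Hypothetical helper: picks the cascade to sample, dithering near the outer edge.
// cascadeIndex        : cascade that contains the surface point
// distToCascadeCenter : distance from the surface point to that cascade's sphere center
// cascadeRadius       : radius of that cascade's culling sphere
// fadeRange           : fraction of the radius, in (0, 1], over which the transition happens
// dither              : per-pixel noise in [0, 1), for example from DitherNoise
int DitherCascadeIndex(int cascadeIndex, float distToCascadeCenter,
                       float cascadeRadius, float fadeRange, float dither)
{
    // 1 well inside the cascade, falling linearly to 0 at its outer edge.
    float fade = saturate((1.0 - distToCascadeCenter / cascadeRadius) / fadeRange);

    // The closer the point is to the edge, the larger the share of pixels
    // that switch to the next cascade, which breaks up the straight seam.
    return (fade < dither) ? cascadeIndex + 1 : cascadeIndex;
}
```

The caller still has to handle the case where the returned index is past the last cascade, for example by treating that pixel as unshadowed.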

BXRP Shadow

```hlsl
int cascadeIndex;
```

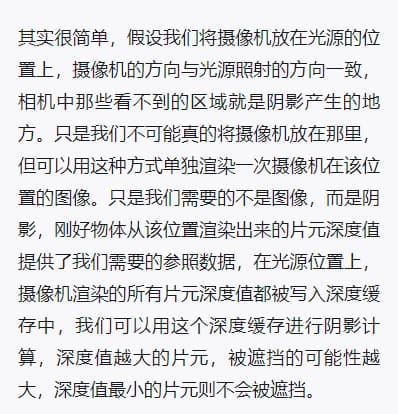

Shadow map texture

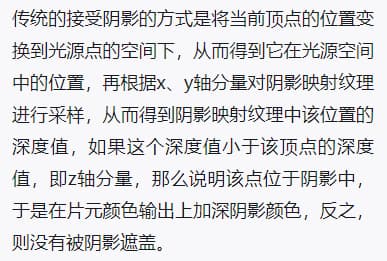

Computing received shadows

Screen-space shadow projection technique